Executive Summary

When making my decision to major in Image Processing and Image Synthesis, my main motivator was my growing curiosity about high performance programming, as I thought that some of the subjects being taught would lend themselves well to furthering my understanding of this domain.

On my own, I discovered through a number of conference talks that the field of finance provides many interesting challenges that align with those interests.

When the opportunity arose to work for IMC, a leading firm in the world of market-making, I jumped at the chance to apply to them for my internship. When asked what kind of work I wanted to do during that time, I highlighted my interest in performance, which led to the subject of writing a benchmark framework for their new exchange connectivity layer, currently in the process of being created and deployed.

This felt like the perfect subject to learn more about finance, a field I had been interested in for some time at that point, and to get exposed to knowledgeable people who could cultivate my budding interest in the field.

During my internship, I was part of the Global Execution team at IMC, a new team that was tasked with working on projects of relevance to multiple desks (each of which is dedicated to working on a specific exchange). Their first contribution was to write the Execution Gateway, a piece of software that acts as the intermediary between IMC's trading algorithms and external exchanges. This is meant to replace the current pattern of each auto-trader connecting directly to the exchanges to place orders, migrating instead to a central piece of infrastructure that translates between internal IMC protocols and decisions on one side, and exchange-facing requests on the other.

As part of this migration process, my mission was to work on providing a simple framework that could be used to measure the performance of such a gateway. To do so, we must be able to instrument one under various scenarios meant to mirror real-life conditions, or exercise edge-cases.

This led me to first get acquainted with the components that go into running the gateway, and what is necessary on the client side to make use of it through the Execution API, which is the interface exposed to downstream consumers of the gateway: the trading algorithms.

Similarly, I learned what the gateway itself needs to be able to communicate with an exchange, as I would also need to control that side in the framework to allow measurements of the time taken for an order to travel from the client to the exchange.

To learn about those dependencies, I studied the existing tests for the Execution API, which bundle client, gateway, and exchange in a single process to test their correct behaviour.

Once I got more familiar with each piece of the puzzle, I got to work on writing the framework itself. This required writing a few modules that would provide data needed as input by the gateway, as a prerequisite to being able to run anything. The next step was getting every component running properly. The last part was to generate some dummy load on the gateway and collect its performance measurements. Those could then be analyzed offline, in a more exploratory manner.

With that work done, I had delivered the final product relating to my internship subject, taking it from a first Proof-Of-Concept to a working end result. Along the way I learned about the specific needs relating to the usage of those benchmarks, and integrated them in the greater IMC ecosystem.

With the initial work on the benchmark framework done, my next area of focus was to add compatibility testing for the gateway. The point of this work was to add, as part of the Continuous Integration pipeline in use at IMC, tests that would exercise the actual gateway binaries used in production, to test for both forward and backward compatibility. This work fits perfectly as a continuation of what I did to write the benchmark framework: both need to run the gateway and generate specific scenarios to test its behaviour. This allowed me to reuse most of the code I had written for the benchmark, and apply it to writing the tests.

The need for reliable tests meant that I had to do a lot of ground work to ensure that they were not flaky. This is probably the part that took the longest in the process, with some deep investigations to understand subtle bugs and behaviours that were exposed by the new tests I was attempting to integrate.

Towards the end of my internship, I presented the work I did on the framework to other developers in the execution teams. This was in part to showcase the work being done by the Global Execution team, and also to participate in the regular knowledge sharing that happens at IMC.

Joining a company during the COVID period of quarantines, working-from-home, and the relatively low amount of face-to-face contact highlighted the need for efficient ways of communicating with my colleagues. Being part of a productive, highly driven team has been a pleasure.

Overall, this internship was a great reaffirmation of my career choices. This was my first experience with an actual project dealing with performance of something actually used in production, and not simply a one-off project to work on and leave aside. Being able to contribute something which will be used long-term, and have impactful consequences, reassures me in the choice of being a software engineer, and the impact of my studies at EPITA.

\newpage

Acknowledgements

Firstly, I would like to thank Jelle Wissink, an engineer from the Global Execution team at IMC. As my mentor, he helped me get acquainted with the technologies used at IMC, guided my explorations of the problems I tackled, and was of great help to solve problems I encountered during my internship. I would also like to thank Erdinc Sevim, the lead of the Global Execution team, for the instructive presentations about trading and IMC's software architecture.

I would also like to thank:

- Laurent Xu, engineer at IMC: he was the first to tell me about the company, and referred me for an interview. He also welcomed me to Amsterdam and introduced me to other French colleagues.

- Étienne Renault, a researcher at EPITA's LRDE: he is in charge of the Tiger Compiler project, and taught the ALGOREP (Distributed Algorithms) class during the course of my major. He is one of the most interesting teachers I have met, and his classes have always been a joy to attend. I'm glad to have gotten to know him through the Tiger maintainer team.

- Élodie Puybareau and Guillaume Tochon, researchers at EPITA's LRDE, and head teachers of the IMAGE major. They are great teachers, very involved, and always listening to student feedback. They have handled the COVID crisis admirably, taking into account the safety of their students and the workload imposed upon them.

- The YAKA & ACU teams, a.k.a. the Teaching Assistant teams for EPITA's first year of the engineering cycle: being a TA was a great source of learning for me. It was one of the most fun and memorable experiences I had at the school.

Finally, I would like to thank my parents who have always been there for me, and my girlfriend Sarah for her unwavering support.

Introduction

My internship is about benchmarking the new service being used at IMC for connecting to and communicating with exchanges.

IMC is a technology-driven trading company, specializing in market-making on various exchanges worldwide. Due to this position, they strive for continuous improvement by making use of technology. And in particular, they have to pay special attention to the performance of their trading system across the whole infrastructure.

In the face of continuous improvement of their system, the performance aspect of any upgrade must be kept at the forefront of the mind in order to stay competitive, and rise to a dominant position globally.

My project fits into the migration of IMC's trading algorithms from their individual drivers connecting each of them directly to the exchanges, to a new central service being developed to translate and interface between IMC-internal communication and exchange-facing orders, requests, and notifications.

Given the scale of this change, and how important such a piece of software is in the trading infrastructure of the company, the performance impacts of its introduction and further development must be measured, and its evolution followed closely.

The first part of my internship was about writing a framework to benchmark such a gateway with a dummy load being generated according to scenarios that can simulate varying circumstances. From those runs, it is also in charge of recording the performance measurements that it has gathered from the gateway, allowing for further analysis of a single run and comparison of their evolution as time goes on.

This initial work being finished, I integrated my framework with the tooling in use at IMC to allow for running it more easily, either locally for development purposes or remotely for measurements. This is also used to test for breakage in the Continuous Integration pipeline, to keep the benchmarks runnable as changes are merged into the code base.

Once that was done, I picked up a user story about compatibility testing: with the way IMC deploys software, we want to ensure that both the gateway and its clients are backward and forward compatible to avoid any surprises in production. When I first worked on this subject, this was only ensured at the protocol level. My goal was to add tests using the actual binaries used in production to test their behaviour across various versions, ensuring that they behave identically.

Subject

The first description of my internship project was given to me as:

The project is about benchmarking a new service we're building related to exchange connectivity. It would involve writing a program to generate load on the new service, preparing a test environment and analyzing the performance results. Time permitting might also involve making performance improvements to the services.

To understand this subject, we must start with an explanation of what exchange connectivity means at IMC: it is the layer in IMC's architecture that ensures the connection between internal trading services and external exchanges' own infrastructure and services. It is at this layer that exchange-specific protocols are normalised into IMC's own protocol messages, and vice versa.

Here is the list of tasks that I was expected to complete during this internship:

- Become familiar with the service.

- Write a dummy load generator.

- Benchmark the system under the load.

- Analyze the measurements.

This kind of project is exactly the reason why I was interested in working in finance and trading. It is a field that is focused on achieving the highest performance possible, because being faster is directly tied with making more trades and results in more profits.

The project was therefore perfectly aligned with my interests, and with the skills that I already had or hoped to develop further.

Context of the subject

Company trade

IMC, as its name suggests, is a market maker. It is specialised in providing liquidity in the market by quoting both sides of the market, and profiting off the trades they make while providing this service.

One key ingredient to this business is latency: due to the competitive nature of the market, we must process the incoming data and execute orders fast enough not to get picked off the market with a bad position.

Service

The exchange connectivity layer must route orders as fast as possible, to stay competitive, reduce transaction costs, and lower latencies which could result in lost opportunities, and therefore lower profits.

It must also take on other duties, due to it being closer to the exchange than the rest of the infrastructure. For example, a trading strategy can register conditional orders with this service: it must monitor the prices of products A and X, and if product A's price rises above X's, then it must start selling product B at price Y.
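To make the conditional order example above more concrete, here is a minimal Python sketch. It is purely illustrative: the `ConditionalOrder` class, its field names, and the `orders_to_send` helper are hypothetical and do not reflect the Execution API or any IMC protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConditionalOrder:
    """Hypothetical model of 'if A trades above X, sell B at price Y'."""
    watched: str      # product whose price is monitored (A)
    reference: str    # product it is compared against (X)
    product: str      # product to sell once the condition holds (B)
    price: float      # price at which to sell it (Y)

    def is_triggered(self, prices):
        # The condition holds when the watched price rises above the reference.
        return prices[self.watched] > prices[self.reference]


def orders_to_send(conditionals, prices):
    """Return the sell orders whose condition holds for the given prices."""
    return [
        ("SELL", order.product, order.price)
        for order in conditionals
        if order.is_triggered(prices)
    ]


if __name__ == "__main__":
    registered = [ConditionalOrder("A", "X", "B", 10.0)]
    print(orders_to_send(registered, {"A": 7.0, "X": 6.0}))
```

Keeping the condition next to the service that sees market data first is what lets it react without a round trip to the strategy.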

Strategy

A new exchange connectivity service called the Execution Gateway, and its accompanying Execution API to communicate with it, are being built at IMC. The eventual goal is to migrate all trading strategies to using this gateway to send orders to exchanges. This will allow it to be scaled more appropriately. However, care must be taken to maintain the current performance during the entirety of the migration in order to stay competitive, and the only way to ensure this is to measure it.

Roadmap

With that context, let's review my expected tasks once more, and expand on each of them to get the roadmap:

- Become familiar with the service: before writing the code for the benchmark, I first needed to understand what goes into the process of a trade at IMC, and what is needed from the gateway and from the clients in order to run them and execute orders. There is a lot of code at IMC: having different teams working at the same time on different trading services results in a lot of churn. The global execution team was created to centralise the work on core services that must be provided to the rest of the IMC workforce. The global execution gateway is one such project, aiming to consolidate all trading strategies under one singular method to send orders to their exchanges.

- Write a dummy load generator: we wanted to send orders under different conditions in order to run multiple scenarios which can model varying cases of execution. Having more data for varying corner cases can make us more confident of the robustness and efficiency of the service. This was especially needed because of the various roles that the gateway must fulfill: not only must it act as a bridge for the communication between exchanges and traders, but also as an order executor. All those cases must be accounted for when writing the different scenarios.

- Benchmark the system under the load: once we could run those scenarios smoothly we needed to start taking multiple measurements. The main one that IMC is interested in is wire-to-wire latency (abbreviated W2W): the time it takes for a trade to go from a trading strategy to an exchange. The lower this time is, the more occasions there are to make good trades.

- Analyze the measurements: the global execution team has some initial expectations of the gateway's performance. A divergence on that part could mean that the measurements are flawed in some way, or that the gateway is not performing as expected. Further analysis can be done to look at the difference between median execution time and the 99th percentile, and analyse the tail of the timing distribution: the smaller it is the better. Having a low execution time is necessary; however, consistent timing also plays an important role in making sure that an order will actually be executed by the exchange reliably.
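As a rough illustration of the analysis step described above, the following Python sketch compares the median with the 99th percentile of a set of latency samples. The function name `summarize_latencies`, the nanosecond unit, and the sample values are assumptions made for the example. They are not taken from the framework or from IMC's tooling.

```python
import statistics


def summarize_latencies(samples_ns):
    """Return the median, the 99th percentile, and their ratio.

    `samples_ns` is assumed to be a list of wire-to-wire latencies in
    nanoseconds, as a benchmark run might dump them for offline analysis.
    """
    if len(samples_ns) < 2:
        raise ValueError("need at least two samples")
    median = statistics.median(samples_ns)
    # quantiles(n=100) returns 99 cut points; the last one is the 99th percentile.
    p99 = statistics.quantiles(samples_ns, n=100, method="inclusive")[98]
    return {"median": median, "p99": p99, "tail_ratio": p99 / median}


if __name__ == "__main__":
    # Made-up samples: mostly fast orders, with a single slow outlier.
    samples = [100] * 99 + [10_000]
    print(summarize_latencies(samples))
```

A tail ratio close to 1 indicates consistent timings, while a large ratio points to a long tail even when the median looks healthy.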

Internship positioning among company works

My work was focused on providing a framework to instrument gateways under different scenarios.

Once that framework is built, to be effective it must be integrated in the existing Continuous Integration platform used at IMC. This enables us to track breaking changes and, eventually, be notified of performance regressions. That last part is yet to be done, needing to be integrated with the new change point detection tool currently being developed internally. Once that is done, we can feed the performance results to automatically see when a regression has been introduced into the system.

With the knowledge I gained working on this project, my next task was to add compatibility testing to ensure backward and forward compatibility of the clients and gateways. This meant having to run the existing tests using the actual production binaries of the gateway, and making sure the tests keep working across versions. This is very similar to the way the benchmarks work, and I could reuse most of the tools developed for the framework to that end.

Internship roadmap

Getting acquainted with the code base

The first month was dedicated to familiarizing myself with the vocabulary at IMC, understanding the context surrounding the team I am working in, and learning about the different services that are currently being used in their infrastructure. I had to write a first proof of concept to investigate what, if any, dependencies would be needed to execute the gateway as a stand-alone system for the benchmark. This allowed me to get acquainted with their development process.

After writing that proof of concept, we were certain that the benchmark was a feasible project, with very few actual dependencies needed to run it. The low number of external dependencies meant fewer moving parts for the benchmarks, and fewer components to set up.

For the ones that were needed, I had to write small modules that would model their behaviour, and be configured as part of the framework, to provide them as input to the gateway under instrumentation.

The framework

With the exploratory phase done, writing the framework was my next task. The first thing to do was ensuring I could run all the necessary components locally, not accounting for correct behaviour. Once I got the client communicating to the gateway, and the gateway connected with the fake exchange, I wrote a few basic scenarios to ensure that everything was working correctly and reliably.

After writing the basis of the framework and ensuring it was in correct working order, I integrated it with the build tools used by the developers and the Continuous Integration pipeline. This allows running a single command to build and run the benchmark on a local machine, which makes for easier iteration when integrating the benchmark framework with a new exchange, and easy testing of regressions in the testing pipeline that is run before merging patches into the code base.

Once this was done, further modifications were done to allow the benchmark to be run using remote machines, with a lab setup specially made to replicate the production environment in a sand-boxed way. This was done in a way to transparently allow either local or remote runs depending on what is desired, without further modification of either the benchmark scenarios or the framework implementation for each exchange.

Under this setup, thanks to a component of the benchmark framework which can be used to record and dump performance data collected and emitted by the gateway, we could take a look at the timings under different scenarios. This showed results close to the expected values, and demonstrated that the framework was a viable way to collect this information.

Compatibility testing

After writing the benchmark framework and integrating it for one exchange, I picked up another story related to testing the Execution API. Before then, all Execution API implementations were tested using what is called the method-based API, using a single process to test its behavior. This method was favored during the transition period to Execution API, essentially being an interface between it and the drivers which connect directly to the exchange: it allowed for lower transition costs while the rest of the Execution API was being built.

This poses two long-term problems:

- The request-based API, making use of a network protocol and a separate gateway binary, inherently relies on the interaction between a gateway and its clients. None of the tests so far were able to check the behaviour of client and server together using this API. Having a way to test the integration between both components in a repeatable way that is integrated with the Continuous Integration pipeline is valuable to avoid regressions.

- Some consumers of the request-based API in production are going to be in use for long periods of time without a possibility for upgrades due to conformance testing. To avoid any problem in production, it is of the utmost importance that the behavior stays compatible between versions.

To that end, I endeavoured to do the necessary modifications to the current test framework to allow running them with the actual gateway binary. This meant the following:

- Being able to run them without reliable timings: due to the asynchronous nature of the Execution API, and the use of network communication between the client, gateway, and exchange, some timing expectations from the tests needed to be relaxed.

- Avoiding any hard-coded port value in the tests: because we may be running many tests in parallel, ports are discovered dynamically, which lets us run them all at once without fear of cross-talk or interference.
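One common way to avoid hard-coded ports is to let the operating system choose a free one by binding to port 0. The Python sketch below shows that general technique. The helper name `reserve_listening_socket` is hypothetical, and this is not necessarily how the test framework discovers its ports.

```python
import socket


def reserve_listening_socket(host="127.0.0.1"):
    """Bind to port 0 so the OS picks a free TCP port.

    Returns the listening socket together with the port it was given.
    Keeping the socket open avoids a race where another process grabs
    the port between discovery and use.
    """
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    sock.bind((host, 0))
    sock.listen()
    return sock, sock.getsockname()[1]


if __name__ == "__main__":
    # Each "fake exchange" in a parallel test run would get its own port.
    first, first_port = reserve_listening_socket()
    second, second_port = reserve_listening_socket()
    print(first_port, second_port)
    first.close()
    second.close()
```

The discovered port can then be passed to the process under test through its configuration instead of being baked into the test.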

Once those changes were done, the tests implemented, and some bugs squashed, we could make use of those tests to ensure compatibility not just at the protocol level but up to the observable behaviour.
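Relaxing timing expectations in asynchronous tests is often done by polling for a condition until a deadline, rather than asserting right after a fixed delay. The sketch below shows that generic pattern in Python. The `wait_until` helper and its default values are assumptions for illustration, not the mechanism used in the Execution API tests.

```python
import time


def wait_until(predicate, timeout_s=5.0, interval_s=0.01):
    """Poll `predicate` until it returns True or the timeout expires.

    Returns True as soon as the condition holds and False on timeout,
    so a test only fails if the event never happens, not if it is late.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        if predicate():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval_s)


if __name__ == "__main__":
    received = []
    received.append("order-ack")  # stand-in for an asynchronous event
    print(wait_until(lambda: "order-ack" in received))
```

With this pattern a slow machine only makes the test slower, while a message that never arrives still fails it.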

Documenting my work

With that work done, I now needed to ensure that the relevant knowledge is shared across the team. This work was two-fold:

- Do a presentation about the benchmark framework: because it only contains the tools necessary as the basis for running benchmarks, other engineers will need to pick it up to write new scenarios, or implement the benchmark for new exchanges. To that end, I presented my work, demonstrated its ease of use and justified some design decisions.

- How to debug problems in benchmarks and compatibility test runs: due to the unconventional setup required to run those, investigating a problem when running either of them necessitates specific steps and different approaches. To help improve productivity when investigating those, I shared how to reproduce the test setup easily, and explained a few of the methods I have used to debug problems I encountered during their development.

Gantt diagram

@startgantt
ganttscale weekly
Project starts 2021-03-01
-- Team 1 --
[Discovering the codebase] as [Discover] lasts 14 days
then [Proof Of Concept] as [POC] lasts 7 days
then [Model RDS Server] as [RDS] lasts 14 days
then [Framework v0] as [v0] lasts 14 days
then [Refactor to v1] as [v1] lasts 14 days
then [Initial results analysis] as [Analysis] lasts 7 days
then [Dumping internal measurements] as [EventAnalysis] lasts 7 days
then [Benchmark different gateway] as [NNF] lasts 14 days
[Compatibility testing] starts 2021-05-30
note bottom
Fixing bugs
Reducing flakiness
end note
[Compatibility testing] ends 2021-08-15
[Intern week] starts 2021-06-28
note bottom
Learn about option theory
end note
[Intern week] ends 2021-07-04
[Holidays] starts 2021-08-16
[Holidays] ends 2021-09-01
@endgantt

Engineering practices

Delivering a project from scratch to completion

During the course of my internship, I had to deliver a product from its first Proof of Concept to a usable deliverable, going through various iterations along the way.

This process started with me getting familiar with the IMC code base, coming up to speed with the tooling in use and with some of the knowledge needed to work on the existing code. Once I was productive, I could start exploring the components needed to write a benchmark using the gateway binaries. This initial POC was written by gutting some existing code in use for the Execution API tests, splitting it into client and exchange code on one side, and gateway code on the other.

From this initial experiment, I got more familiar with the different components that would go into an implementation of the benchmark. With the help of my mentor, I could identify the dependencies that would need to be provided to the gateway binary in order to instrument it under different scenarios.

I worked on writing those components in a way that was usable for the benchmark, making sure that they were working and tested along the way. One such component was a fake version of the RDS, populated from the benchmark scenario, which provides the gateway with the information about financial instruments that it needs in order to use them in the scenario, e.g. ordering a stock.

I went on to write a first version of the benchmark framework for a specific gateway and a specific exchange: this served as the basis for further iteration after receiving feedback about my design. Writing a second benchmark for a second exchange and gateway led to more re-design.

The basic components of the benchmark framework were useful outside of their original intended purposes, as I could reuse them to write the compatibility tests, which were similar in the way they make use of the gateway to run a scenario and verify the correct behaviour of our system.

With all that work accomplished, I now needed to share my knowledge to make sure that my mentor and I were not the only people who knew that this framework existed, or how to use and work with it. To that end, I wrote documentation with tips to help debug the benchmarks and tests that use gateway binaries. I also gave a presentation at the end of my internship to demonstrate how to run a benchmark, and explain the main components of the framework.

I have delivered a complete, featureful product from start to finish, complete with documentation and demonstration of its use. This is a central goal of our schooling at EPITA: making us well-rounded engineers that can deliver their work to completion.

Acquiring new skills and knowledge

IMC is part of the financial tech sector, taking a position of market-maker on a large number of exchanges worldwide.

The financial sector, even though I was attracted to it by my previous exposure from conference sponsors and blog posts from engineers in the sector, was still unfamiliar to me when first joining the company.

There is a large amount of vocabulary and knowledge specific to this industry, not even to mention the infrastructure and tooling in use at IMC, which while not specific to this industry in particular, was still foreign to me at that point.

Before starting my internship, I was advised to read a book about high frequency trading, which gave me some context on how exchanges work, and a few important words that are part of the financial vocabulary. In addition, I learned about IMC's trading infrastructure through a number of presentations that my team lead organised with new hires during the beginning of my internship. This gave me more context about what part of the existing infrastructure was aimed to be replaced by the new Execution Gateway and the Execution API. It also taught me about some of the basics of pricing theory, which underpins our whole strategy layer to come up with an appropriate valuation for any product we are interested in trading.

I got to further learn about trading and option theory during a training week organized with a dozen other summer interns: we were taught some of the mathematics that form the basis of valuation and risk reduction in trading, along with the associated vocabulary, and applied them during trading workshop exercises with the other interns.

On the technical side, not only did I learn about the software stack in use at IMC, but as I worked on more and more parts of the code base I discovered new tooling put in place to work on and debug parts of our stack that are too costly to set up or use on any dev computer. One such solution is the fullsim system, which allows us to simulate our FPGA engines in software, so that developers can debug issues with FPGA interaction without having to fiddle with actual FPGA cards or know how to use them. I also introduced my colleagues to tools that they were unaware of, the most prominent being the one I always reach for first when trying to debug a piece of software: rr, which allows one to record a program's execution and run it under a debugger in a totally deterministic manner. It allows replaying and rewinding execution at will, making it a great asset when dealing with issues that are sporadic or that depend on tricky timings, as in networked systems.

IMC encourages knowledge sharing across all teams: it permeates the company culture and shows in many ways. An execution engineer is encouraged to learn about trading, which gives us more context when interacting with traders, spotting mistakes in new strategies, or deciding which features would make sense to write next. Catch-up meetings are organized regularly between teams. Presentations are given to teach people about the work that is being accomplished to improve every part of our infrastructure, from deployment tooling and developer productivity to new strategies or components of our systems.

Thanks to my well-rounded education I felt comfortable being exposed to all this information. But I also felt confident that I fit in from the start, and could keep pace with the information that was fed to me. I am able to pick up those new skills quickly, because EPITA taught us the most important skill of this trade: I learnt how to learn, and how to flourish while doing so.

Illustrated analysis of acquired skills

Working in a team and project management

Delivering a product is the goal of working in a business. However the process to get from zero to final product is unique to every company.

During our project management classes, we were taught various ways of planning a product from before the first line of code to its final delivery. The prevailing culture in our industry in the last decade has been to adopt an agile work environment: evolve the product and its product backlog alongside each other, adapting to changing requirements. This is in sharp contrast to the traditional way of planning projects, called Waterfall, where a specification of behaviour is drawn upfront, and worked on for a long period of time with little to no modification done to that project charter.

We can say that IMC has embraced a more Agile way of delivering new features: the products are continuously being worked on and improved, the work being organized into a backlog of issues, partitioned into epics. And similarly, the company culture embraces a few of the processes associated with Agile programming. The one that has most affected me is the daily stand up, a meeting organized in the morning to interact with the rest of the team, summarising the work that has been accomplished the day before, and what one wishes to work on during the day.

During the times of remote work because of COVID, interactions with the team at large feel more limited than they otherwise would be when working alongside one another at the office. I have learned to communicate better with my colleagues: explaining what I am working on, reaching out to ask questions, and discussing issues with them.

Working in a large code base

IMC has a large body of code written to fulfil their business needs. It is rare to work on a pre-existing code-base during the school curriculum. The few projects that do provide you with a basis of code to complete them are at scales that have nothing to do with what I had to get acclimated to at IMC.

This has multiple ramifications, all linked to the amount of knowledge embedded in the code. It is simply impossible to understand everything in depth: one cannot hold the entirety of the logic that has been written in one's head.

Due to that difference, my way of writing software and squashing bugs had to evolve, from an approach that worked on small programs to one that is more scalable: I could not just dive into a problem head-first, trying to understand everything that happens down to every detail, before being able to fix the problem. The amount of minutiae is too large, and it would not be productive to try to derive an understanding of the whole application before starting to work on it.

Instead I had to revise my approach, getting surface level understanding of the broad strokes, thinking more carefully about the implications of an introduced change, coming up with a theory and confirming it.

This is only possible because of my prior experience on the large number of projects I had to work on at EPITA, which exposed me to various subjects, sharpened my inductive skills, and built my intuition for picking out the important pieces of a puzzle.

Debugging distributed systems

My work specifically centered around running, interacting with, instrumenting, and observing production binaries for use in testing or benchmarking.

Due to this, and because nobody writes perfect code the first time, I have had to inspect and debug various issues between my code and the software I was running under it. That kind of scenario is difficult to inspect, make sense of, and debug. The behaviour is distributed over multiple separate processes, each of which carries its own state.

In a way that is similar to our Distributed Algorithms final project, I had to think carefully about the states of my processes to tackle those issues. I had to reflect on the problem I encountered, trying to reverse-engineer what must have gone wrong to get to that situation, and make further observations to refine my understanding of the issue.

This iterative process of chipping away at the problem until the issue becomes self-evident is inherent to working on such systems. One cannot just inspect all the processes at once, and immediately derive what must have happened to them. It feels more akin to detective work, with the usual suspect not being Colonel Mustard in the dining room with the wrench, but instead my own self having forgotten to account for an edge case.

Benefits of the internship

Contributions to the company

The work I have accomplished during my internship has resulted in tools that can be used as the basis for extensive testing using production binaries during the iteration of the development process.

From this work we can retain two main points for IMC:

- An extensible framework for benchmarking the gateways and measuring their performance. Thanks to the ease of writing new scenarios, and the integration of the benchmarks with the build system in use at IMC and its Continuous Integration pipeline, it can easily be used to monitor the evolution of performance and watch for regressions. Further down the line, it can be integrated with the change point detection service that is being developed in-house, to contact the relevant people when the system detects that a regression has happened, making the offending change easier to identify. This is key to staying competitive, ensuring the latency of our systems remains as low as possible and does not creep upwards.

- My work on compatibility testing, which is an important step in avoiding any surprising behaviour or downtime in production. Due to the long turnaround time of upgrades in certain regions, and the cost of lost opportunity for any downtime, minimizing the probability of such problems results directly in more profits being made.
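To make the idea of watching for regressions concrete, here is a minimal sketch of how two benchmark runs could be compared. It is not the in-house change point detection service, nor the framework's actual code: the function name `has_regressed`, the nanosecond unit, and the 5% tolerance are all assumptions chosen for illustration.

```python
from statistics import median


def has_regressed(baseline_ns, current_ns, tolerance=0.05):
    """Return True when the current median latency exceeds the baseline
    median by more than `tolerance` (a fraction, 0.05 meaning 5%).

    Hypothetical helper: a plain threshold check, not a statistical
    change point detection algorithm.
    """
    if not baseline_ns or not current_ns:
        raise ValueError("both runs need at least one latency sample")
    return median(current_ns) > median(baseline_ns) * (1 + tolerance)
```

A check of this kind could run after each benchmark in the Continuous Integration pipeline; using the median rather than the mean keeps a single outlier from triggering a false alarm.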

Furthering my learning

During my internship, I got to work on a large code base, interact with smart and knowledgeable colleagues, and tinker on what constitutes the basic bricks of IMC's production software.

Working at IMC was my first experience with such a large code base, a dizzying amount of code. It is impossible to wrap your head around everything that is happening in a given program. Up until that point I had only encountered school projects, of relatively small size and whose behaviour could easily be understood. Dealing with problems by trying to understand everything that is happening in a program is a valid strategy for those. It is not, however, a scalable way of working on software, and I needed to change my way of thinking about and dealing with the problems I encountered during my work. To cope with that, I learned to isolate the actual source of the problem, instead of trying to understand the whole system around it.

Interacting with the team was a great help in that endeavour. Knowing whom to ask, and learning how to ask relevant questions, are once again essential in achieving productivity in those circumstances. This is doubly true in times of remote working, when turning around and asking your colleague a question is not so simple. At first I had trouble actively using the internal messaging app to ask questions, and I was encouraged to ask liberally instead of staying stuck on my own.

Conclusion

Education and career objectives

I chose to major in Image Processing and Image Synthesis for multiple reasons, notably my interest in high performance programming, and thought that this major would lend itself well to it. This proved to be true, although more so due to applying it to the projects that we were given rather than the courses we were taught (except for a few which specifically focused on it).

Through watching conference presentations, I learned about the field of finance and thought it would provide interesting challenges that aligned with my interests. This motivated my choice to intern at IMC, even though their business is far removed from the core teachings of my major. This, too, proved to be true, and I'm glad to see my initial hunch panning out the way it has.

Improving the major

Having more focus on measuring the results and performance of our projects would be an interesting idea. To put it into context for the major: the need for real-time image analysis and other such constraints means that having the skills to measure and improve our code can be a necessary part of working in the industry.

The one class that stands out to me as having this issue front and center is the GPGPU course, introducing us to massively parallel programming on a graphics card. However, we were mostly left to our own devices to figure out effective ways to measure and analyse those results. Providing more guidance would be a productive endeavour, ensuring that the students are provided with the correct tool set to deal with those problems.

Introspection

Working abroad, with the additional COVID restrictions, is a harsh transition from the routine of school. However, both the company and the team have made it easy to adjust.

- The daily stand-up meeting and weekly retrospective seem more important than ever when working from home means you can potentially go days without talking to your colleagues.

- IMC is very proactive in organising regular events for their employees. This is a great way to feel more engaged during such a period. They also organised a week of training once the other interns had joined, which created a broader network of relationships in a foreign city.

- My mentor encouraged me to ask as many questions as I could when I first started my internship, and I attended some presentations which gave additional context about the work being done by the team. This was helpful in getting over the feeling of being overwhelmed when first getting acquainted with the code and technology being developed and used.

- The gradual return to the office, which allowed me to arrange one day a week to work next to my mentor, led to more one-on-one interactions, which feel more productive than the usual textual ones.

Career evolution

This internship was everything I expected and more. The people are great, the company is thriving, the work environment outstanding.

The fintech sector is full of problems that I find interesting. I loved learning about the basic theory of trading, which forms the basis for our algorithms' decisions. I had a great deal of enjoyment working on my projects during the internship, despite the few moments of frustration that come from working on a distributed system.

Working at IMC, you are surrounded by smart and hard-working engineers, and encouraged to interact with everybody to spread knowledge. Their focus on continual improvement means that you are always learning and making yourself better. Furthermore, they take good care of their employees, and the atmosphere is focused yet casual and playful.

All in all, I think that IMC is a great place to work, and there are few companies like it. This will have an impact on how I rate potential future employers, as I expect few places to be as well-rounded as IMC.

Appendix

Vocabulary

- Bid and ask: Respectively the highest price a buyer is willing to pay and the lowest price a seller is willing to accept for a stock or other financial instrument. The narrower the spread between the two prices, the more liquidity there is in the market for that product.

- Continuous Integration: The practice of automating the integration of code from multiple contributors into a single software project.

- Market-making: A market-maker provides liquidity to the market by continuously quoting both buy and sell prices on the market, hoping to make a profit on the bid-ask spread.

About IMC

International Marketmakers Combinations (IMC) was founded in 1989 in Amsterdam, by two traders working on the floor of the Amsterdam Equity Options Exchange. At the time, trading was executed on the exchange floor by traders manually calculating the price at which to buy or sell. IMC was ahead of its time, being among the first to understand the important role that technology and innovation would play in the evolution of market-making. This innovative culture still drives IMC 30 years later.

Since then, they've expanded to multiple continents, with offices operating in Chicago, Amsterdam, and Sydney. Their key insight for trading is based on data and algorithms, making use of their execution platform to provide liquidity to financial markets globally.

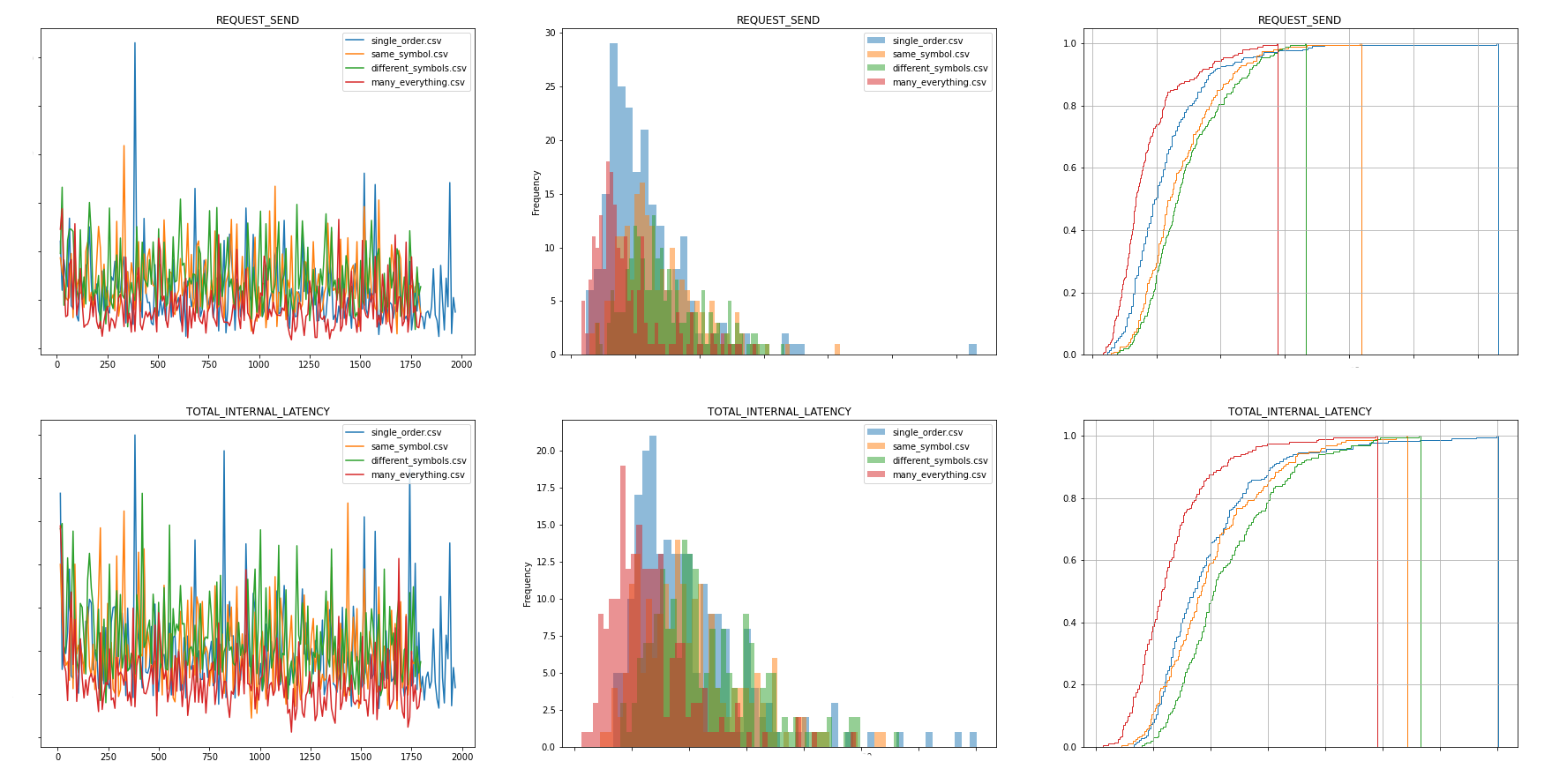

Results & Comments

The figure above showcases a few sample results that can be obtained by using the benchmark framework. The gateway keeps track of some internal events and their timings, and reports them back to the benchmark, which dumps the data to allow for further analysis.
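As an illustration of the kind of further analysis the dumped timings allow, the following sketch summarises a list of latency samples. The function name `summarise_latencies` and the nanosecond unit are assumptions made for this example; the actual format of the data reported by the gateway is not described here.

```python
from statistics import median, quantiles


def summarise_latencies(samples_ns):
    """Return the min, median, 99th percentile and max of latency samples.

    Hypothetical helper: `samples_ns` is assumed to be a list of
    durations in nanoseconds, one per measured event.
    """
    if len(samples_ns) < 2:
        raise ValueError("need at least two samples")
    ordered = sorted(samples_ns)
    # quantiles(n=100) returns the 99 cut points between percentiles,
    # so index 98 holds the 99th percentile.
    cut_points = quantiles(ordered, n=100, method="inclusive")
    return {
        "min": ordered[0],
        "median": median(ordered),
        "p99": cut_points[98],
        "max": ordered[-1],
    }
```

Tail percentiles such as the 99th are reported alongside the median because rare slow events, rather than typical ones, are often what matters for a latency-sensitive system.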